
The steps of conducting a two-sample t-test are quite similar to those of the one-sample test. For the sake of consistency, we will focus on another example dealing with birthweight and prenatal care. In this example, rather than comparing the birthweight of a group of infants to some national average, we will examine a program's effect by comparing the birthweights of babies born to women who participated in an intervention with the birthweights of babies born to women who did not.

A comparison of this sort is very common in medicine and social science. To evaluate the effects of some intervention, program, or treatment, a set of subjects is divided into two groups. The group receiving the treatment to be evaluated is referred to as the treatment group, while the group that does not receive it is referred to as the control or comparison group. In this example, mothers who are part of the prenatal care program to reduce the likelihood of low birthweight are the treatment group, and the control group is composed of women who do not take part in the program.

Returning to the two-sample t-test, the steps to conduct the test are similar to those of the one-sample test.

Establish Hypotheses

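The first step to examining this question is to establish the specific hypotheses we wish to examine. Specifically, we want to establish a null hypothesis and an alternative hypothesis to be evaluated with data. In this case:

- Null hypothesis: the average birthweight of babies born to mothers in the program equals that of babies born to mothers who did not take part.
- Alternative hypothesis: the two average birthweights differ.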

Calculate Test Statistic

Calculation of the test statistic requires three components:

1. The averages of both samples (observed averages)

Statistically, we represent these as x̄1 and x̄2.

2. The standard deviations (SD) of both samples

Statistically, we represent these as s1 and s2.


3. The number of observations in both samples, represented as n1 and n2.

From hospital records, we obtain the following values for these components:

Use This Value To Determine P-Value

Having calculated the t-statistic, compare the t-value with a standard table of t-values to determine whether the t-statistic reaches the threshold of statistical significance.

With a t-score this high, the p-value is 0.001, which gives us a basis to reject the null hypothesis and conclude that the prenatal care program made a difference.
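The calculation from the three components can be sketched in Python as follows. This is a minimal illustration, not the tutorial's own formula: it assumes the unpooled standard error sqrt(s1²/n1 + s2²/n2), and the summary values are hypothetical placeholders, not the hospital records mentioned above.

```python
import math

def two_sample_t(mean1, sd1, n1, mean2, sd2, n2):
    """t statistic for the difference between two independent sample means.

    Assumption: uses the unpooled standard error sqrt(sd1^2/n1 + sd2^2/n2).
    """
    standard_error = math.sqrt(sd1 ** 2 / n1 + sd2 ** 2 / n2)
    return (mean1 - mean2) / standard_error

# Hypothetical birthweight summaries in grams (treatment first, control second).
t_stat = two_sample_t(mean1=3100.0, sd1=420.0, n1=75,
                      mean2=2750.0, sd2=425.0, n2=75)
print(f"t = {t_stat:.2f}")
```

Swapping the two groups only flips the sign of the statistic, so the order matters for one-sided questions but not for a two-sided test.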

Idea and demo example

The idea of the two-sample t-test is to compare two population averages by comparing two independent samples. A common experimental design is to have a test condition and a control condition and to randomly assign each subject to one of them. One variable is then measured and compared between the two conditions (samples).

Suppose there is a study to compare two study methods and see whether they improve grades differently. There is a new method (treatment, or t) and a standard method (control, or c). Users are randomly assigned to one of the two methods. After they are trained with the method, their performance is measured as grades. The data set is “reading.csv”. The problem is to test whether the two methods make a difference. The model you can set up for this problem is

Grade (continuous) ~ method (categorical: 2 levels)

Open the data set in SAS.

Checking assumptions

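The two-sample t-test assumes that the two samples are independent and that the data in each sample are approximately normally distributed.

When the assumptions are not met, other methods are possible based on the two samples: for example, a paired t-test when the samples are dependent, or a nonparametric test such as the Wilcoxon rank-sum test when normality fails.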

In this demo example, two samples (control and treatment) are independent, and pass the Normality check. So we continue with two sample t-test. Note that the test is two-sided (sides=2), the significance level is 0.05, and the test is to compare the difference between two means (mu1 - mu2) against 0 (h0=0).

Compare two independent samples

Reading the output

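Note that the results show both 'Pooled' and 'Satterthwaite' sections; which one to read depends on whether the two sample variances can be treated as equal. The test on Equality of Variances is given at the end of the output.

Some people use this simple rule: if the p-value of the Equality of Variances test is below 0.05, read the 'Satterthwaite' (unequal variances) section; otherwise read the 'Pooled' section.

In this example, the p-value of the Equality of Variances test is 0.0318 < 0.05, so we should read the 'Satterthwaite' section.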

The conclusion is to reject the null hypothesis: the reading grades under the two methods are significantly different.
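To make the difference between the 'Pooled' and 'Satterthwaite' sections concrete, here is a minimal Python sketch of how the two t statistics and their degrees of freedom can be computed. It is not SAS's implementation, and the grades are hypothetical values, not the contents of reading.csv.

```python
import math
from statistics import mean, variance

def pooled_t(x, y):
    """Equal-variance t statistic and its degrees of freedom."""
    nx, ny = len(x), len(y)
    df = nx + ny - 2
    s2 = ((nx - 1) * variance(x) + (ny - 1) * variance(y)) / df
    t = (mean(x) - mean(y)) / math.sqrt(s2 * (1 / nx + 1 / ny))
    return t, df

def satterthwaite_t(x, y):
    """Unequal-variance t statistic with Satterthwaite degrees of freedom."""
    nx, ny = len(x), len(y)
    a, b = variance(x) / nx, variance(y) / ny
    t = (mean(x) - mean(y)) / math.sqrt(a + b)
    df = (a + b) ** 2 / (a ** 2 / (nx - 1) + b ** 2 / (ny - 1))
    return t, df

# Hypothetical grades for the two methods.
treatment = [78, 85, 92, 88, 95, 81, 90, 86]
control = [70, 82, 65, 90, 74, 60, 85, 77, 68, 79]

t_p, df_p = pooled_t(treatment, control)
t_w, df_w = satterthwaite_t(treatment, control)
print(f"Pooled:        t = {t_p:.3f}, df = {df_p}")
print(f"Satterthwaite: t = {t_w:.3f}, df = {df_w:.2f}")
```

The Satterthwaite degrees of freedom are generally not an integer and never exceed the pooled value, which is why that section is the more conservative choice when the variances differ.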

Note that SAS performs a two-sided test, meaning the hypothesis is whether there is a significant difference between the two groups in either direction. If one wants to test whether one group is greater (or smaller) than the other, the p-value can be divided by 2, provided the observed difference lies in the hypothesized direction. For example, p-value/2 = 0.0141/2 = 0.007 < 0.05, hence the conclusion of the one-sided test is to reject the null hypothesis, and therefore the new method improves the grades.
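The halving rule can be sketched as a small helper. The function name and the sign convention (t computed as new method minus standard method) are assumptions made for illustration; the value 2.6 below is a hypothetical t statistic used only for its sign.

```python
def one_sided_p(two_sided_p, t_stat, alternative="greater"):
    """Convert a two-sided p-value into a one-sided one.

    Halving is valid only when the observed t statistic points in the
    direction named by the alternative; otherwise the one-sided p-value
    is 1 - p/2.
    """
    if alternative not in ("greater", "less"):
        raise ValueError("alternative must be 'greater' or 'less'")
    in_direction = t_stat > 0 if alternative == "greater" else t_stat < 0
    return two_sided_p / 2 if in_direction else 1 - two_sided_p / 2

# Two-sided p-value from the text; the t value is hypothetical.
p_one_sided = one_sided_p(0.0141, t_stat=2.6, alternative="greater")
print(f"one-sided p = {p_one_sided:.5f}")
```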

We now consider an experimental design where we want to determine whether there is a difference between two groups within the population. For example, let’s suppose we want to test whether a new drug for treating cancer is any more or less effective than a placebo. One approach is to create a random sample of 40 people, half of whom take the drug and half of whom take a placebo. For this approach to give valid results it is important that people be assigned to each group at random. Such samples are independent.
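Random assignment of the 40 subjects can be sketched as follows. The function name and the use of integer subject IDs are illustrative assumptions; any procedure that gives every subject the same chance of landing in either group would serve.

```python
import random

def assign_groups(subject_ids, seed=None):
    """Shuffle the subjects and split them into two equal-sized groups."""
    rng = random.Random(seed)  # a fixed seed makes the assignment reproducible
    shuffled = list(subject_ids)
    rng.shuffle(shuffled)
    half = len(shuffled) // 2
    return shuffled[:half], shuffled[half:]

# 40 hypothetical subject IDs, 20 per group.
drug_group, placebo_group = assign_groups(range(1, 41), seed=0)
print(len(drug_group), len(placebo_group))
```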

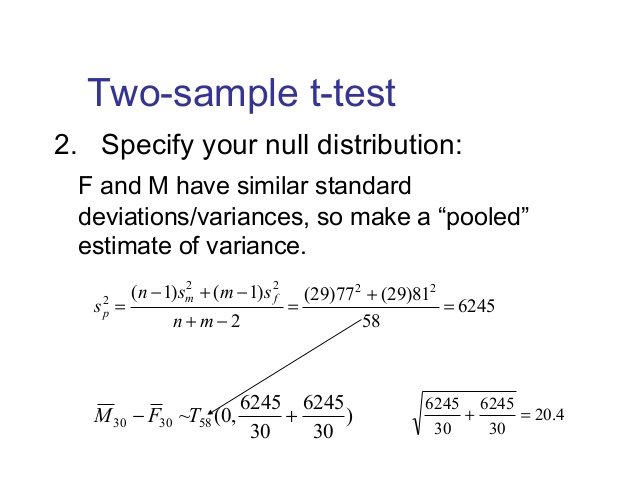

When the population variances are known, hypothesis testing can be done using a normal distribution, as described in Comparing Two Means when Variances are Known. But population variances are not usually known. The approach we use instead is to pool sample variances and use the t distribution.

We consider three cases where the t distribution is used. We deal with the first of these cases, in which the two population variances are equal, in this section.

Theorem 1: Let x̄ and ȳ be the sample means of two sets of data of size nx and ny respectively. If x and y are normal, or nx and ny are sufficiently large for the Central Limit Theorem to hold, and x and y have the same variance, then the random variable

$$t = \frac{(\bar{x} - \bar{y}) - (\mu_x - \mu_y)}{s\sqrt{\frac{1}{n_x} + \frac{1}{n_y}}}$$

has distribution T(nx + ny – 2) where

$$s^2 = \frac{(n_x - 1)s_x^2 + (n_y - 1)s_y^2}{(n_x - 1) + (n_y - 1)}$$

Observation: s, as defined above, can be viewed as a way to pool sx and sy, and so s² is referred to as the pooled variance. Also note that the degrees of freedom of t is the value of the denominator of s² in the formula given in Theorem 1.


Real Statistics Excel Functions: The following functions are provided in the Real Statistics Resource Pack.

VAR_POOLED(R1, R2) = pooled variance of the samples defined by ranges R1 and R2, i.e. s² of Theorem 1

STDEV_POOLED(R1, R2) = pooled standard deviation of the samples defined by ranges R1 and R2, i.e. s of Theorem 1

STDERR_POOLED(R1, R2, b) = pooled standard error of the samples defined by ranges R1 and R2. This is equal to the denominator of t in Theorem 1 if b = TRUE (default) and equal to the denominator of t in Theorem 1 of Two Sample t Test with Unequal Variances if b = FALSE. When the sample sizes are equal, b = TRUE or b = FALSE yields the same result.

Observation: Each of these functions ignores all empty and non-numeric cells.
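For readers working outside Excel, the three quantities can be sketched in Python. These are hypothetical analogues written for illustration, not the Real Statistics implementations: they do not skip empty or non-numeric entries, and the b = FALSE branch assumes the unequal-variance standard error sqrt(s1²/n1 + s2²/n2).

```python
import math
from statistics import variance

def var_pooled(r1, r2):
    """Pooled variance: sample variances weighted by their degrees of freedom."""
    n1, n2 = len(r1), len(r2)
    return ((n1 - 1) * variance(r1) + (n2 - 1) * variance(r2)) / (n1 + n2 - 2)

def stdev_pooled(r1, r2):
    """Pooled standard deviation: square root of the pooled variance."""
    return math.sqrt(var_pooled(r1, r2))

def stderr_pooled(r1, r2, b=True):
    """Standard error of the difference in means.

    b=True uses the pooled standard deviation; b=False uses the
    unequal-variance form sqrt(var1/n1 + var2/n2).
    """
    n1, n2 = len(r1), len(r2)
    if b:
        return stdev_pooled(r1, r2) * math.sqrt(1 / n1 + 1 / n2)
    return math.sqrt(variance(r1) / n1 + variance(r2) / n2)

# Hypothetical samples of equal size.
r1 = [13, 17, 19, 11, 20, 15, 18, 9, 12, 16]
r2 = [12, 8, 6, 16, 12, 14, 10, 18, 4, 11]
print(stderr_pooled(r1, r2, True), stderr_pooled(r1, r2, False))
```

With equal sample sizes the two standard errors coincide, which matches the remark above about b = TRUE and b = FALSE.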

Example 1: A marketing research firm tests the effectiveness of a new flavoring for a leading beverage using a sample of 20 people, half of whom taste the beverage with the old flavoring and half of whom taste the beverage with the new flavoring. The people in the study are then given a questionnaire which evaluates how enjoyable the beverage was. The scores are as in Figure 1. Determine whether there is a significant difference between the perception of the two flavorings.


Figure 1 – Data and box plot for Example 1

As we can see from the box plot in Figure 1 the data in each sample is reasonably symmetric and so we use the t test with the following null hypothesis:

H0: μ1 – μ2 = 0; i.e. there is no difference between the two flavorings

Since the sample variances are similar we decide that the population variances are also likely to be similar and so apply Theorem 1.

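And so s = √s² = 4.01. Now, since nx = ny = 10 and μx – μy = 0 under the null hypothesis,

$$t = \frac{\bar{x} - \bar{y}}{s\sqrt{\frac{1}{n_x} + \frac{1}{n_y}}} = 2.18$$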

Since p-value = T.DIST.2T(t, df) = T.DIST.2T(2.18, 18) = .043 < .05 = α, we reject the null hypothesis, concluding that there is a significant difference between the two flavorings. In fact, the new flavoring is significantly more enjoyable.
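Outside Excel, the two-sided p-value can be approximated with the Python standard library alone. The sketch below is one possible approach, not how T.DIST.2T is implemented: it integrates the t density numerically with Simpson's rule, and the function names are hypothetical.

```python
import math

def t_pdf(x, df):
    """Density of the t distribution with df degrees of freedom."""
    log_c = (math.lgamma((df + 1) / 2) - math.lgamma(df / 2)
             - 0.5 * math.log(df * math.pi))
    return math.exp(log_c - (df + 1) / 2 * math.log1p(x * x / df))

def two_sided_p(t, df, steps=2000):
    """Two-sided p-value: 1 minus twice the area under the density on [0, |t|].

    The area is computed with Simpson's rule; steps must be even.
    """
    upper = abs(t)
    h = upper / steps
    total = t_pdf(0.0, df) + t_pdf(upper, df)
    for i in range(1, steps):
        total += (4 if i % 2 else 2) * t_pdf(i * h, df)
    return 1 - 2 * (total * h / 3)

p_value = two_sided_p(2.18, 18)
print(f"p = {p_value:.3f}")
```

If SciPy is available, `2 * scipy.stats.t.sf(abs(t), df)` gives the same quantity without the hand-written integration.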

The same result can be obtained by use of Excel’s t-Test: Two-Sample Assuming Equal Variances data analysis tool, the results of which are as follows.

Figure 2 – Output from Excel’s data analysis tool

Observation: The Real Statistics Resource Pack also provides a data analysis tool which supports the two independent sample t test, but provides additional information not found in the standard Excel data analysis tool. Example 3 in Two Sample t Test: Unequal Variances gives an example of how to use this data analysis tool.

Example 2: To investigate the effect of a new hay fever drug on driving skills, a researcher studies 24 individuals with hay fever: 12 who have been taking the drug and 12 who have not. All participants then entered a simulator and were given a driving test which assigned a score to each driver as summarized in Figure 3.

Figure 3 – Sample data and histograms for Example 2

As in the previous example, we plan to use the t-test, but with a sample this small we first need to check to see that the data is normally distributed (or at least symmetric). This can be seen from the histograms. Also the variances are relatively similar (15.18 and 17.88) and so we can again use the t-Test: Two-Sample Assuming Equal Variances data analysis tool to test the following null hypothesis:

H0: μcontrol = μdrug

Figure 4 – Two sample data analysis results

Since tobs = .10 < 2.07 = tcrit (or p-value = .921 > .05 = α) we retain the null hypothesis; i.e. the data provide no evidence at the 5% significance level of a difference between the two groups.

Observation: The t-test is quite robust even when the underlying distributions are not normal provided the sample size is sufficiently large (usually over 25 or 30). The t-test can be valid even with smaller sample sizes, provided the samples have similar shape and are not too skewed.

Effect size

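The Cohen effect size d can be calculated as in One Sample t Test, namely:

$$d = \frac{|\mu_1 - \mu_2|}{\sigma}$$

This is approximated by

$$d = \frac{|\bar{x}_1 - \bar{x}_2|}{s}$$

where s is the pooled standard deviation.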

Example 3: Find the effect size for the study in Example 2.

This means that the control group has a driving score 4.1% of a standard deviation more than the group that is taking the hay fever medication. This is a very small effect.

As we saw in the one sample case (see One Sample t Test), this effect size statistic is biased, especially for small samples (n < 20). An unbiased estimator of the population effect size is given by Hedges’s effect size g:

$$g = d \cdot \frac{\Gamma(m)}{\Gamma\left(m - \frac{1}{2}\right)\sqrt{m}}$$

where df = n1 + n2 – 2 and m = df/2.
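Both effect sizes can be sketched in Python. Because the raw scores of Example 2 are not reproduced here, the sketch recovers d from the rounded t statistic (t = .10 with 12 subjects per group) using d = |t|·sqrt(1/n1 + 1/n2), which follows from the pooled-variance t formula; the function names are hypothetical, and the result is only as precise as the rounded t.

```python
import math

def cohens_d_from_t(t, n1, n2):
    """Cohen's d recovered from a pooled-variance t statistic."""
    return abs(t) * math.sqrt(1 / n1 + 1 / n2)

def hedges_g(d, n1, n2):
    """Hedges's g: Cohen's d times the correction Gamma(m) / (Gamma(m - 1/2) * sqrt(m))."""
    df = n1 + n2 - 2
    m = df / 2
    # lgamma avoids overflow of the gamma function for large df.
    correction = math.exp(math.lgamma(m) - math.lgamma(m - 0.5)) / math.sqrt(m)
    return d * correction

d = cohens_d_from_t(0.10, 12, 12)
g = hedges_g(d, 12, 12)
print(f"d = {d:.3f}, g = {g:.3f}")
```

The correction factor is below 1 and approaches 1 as the degrees of freedom grow, so g is always slightly smaller than d.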

